Difference between revisions of "Conduct search engine discovery/reconnaissance for information leakage (OTG-INFO-001)"

(De-Googled text / Added comment about checking for error messages)

|||

| Line 2: | Line 2: | ||

== Summary == | == Summary == | ||

| − | There are direct and indirect elements to | + | There are direct and indirect elements to search engine discovery and reconnaissance. Direct methods relate to searching the indexes and the associated content from caches. Indirect methods relate to gleaning sensitive design and configuration information by searching forums, newsgroups and tendering websites. |

| − | Once | + | Once a search engine robot has completed crawling, it commences indexing the web page based on tags and associated attributes, such as <TITLE>, in order to return the relevant search results. [1] |

| − | If the robots.txt file is not updated during the lifetime of the web site, then it is possible for web content not intended to be included in | + | If the robots.txt file is not updated during the lifetime of the web site, and inline HTML meta tags that instruct robots not to index content ahve not been used, then it is possible for indexes to contain web content not intended to be included in by the owners. Website owners may use the previously mentioned robots.txt, HTML meta tags, authentication and tools provided by search engines to remove such content. |

| − | |||

| − | |||

== Test Objectives == | == Test Objectives == | ||

| Line 21: | Line 19: | ||

* Logon procedures and username formats | * Logon procedures and username formats | ||

* Usernames and passwords | * Usernames and passwords | ||

| + | * Error message content | ||

| + | * Development, test, UAT and staging versions of the website | ||

=== Black Box Testing === | === Black Box Testing === | ||

| − | Using the advanced "site:" search operator, it is possible to restrict | + | Using the advanced "site:" search operator, it is possible to restrict search results to a specific domain [2]. Do not limit testing to just one search engine provider - they may generate different results depending on when they crawled content and their own algorithms. Consider: |

| + | |||

| + | * Baidu | ||

| + | * binsearch.info | ||

| + | * Bing | ||

| + | * Duck Duck Go | ||

| + | * ixquick/Startpage | ||

| + | * Google | ||

| + | * Shodan | ||

| + | |||

| + | Duck Duck Go and ixquick/Startpage provide reduced information leakage about the tester. | ||

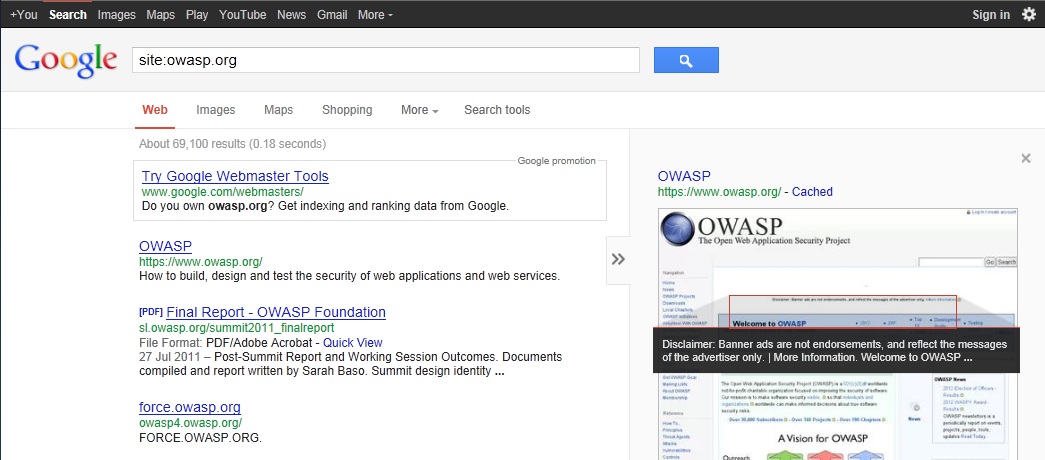

Google provides the Advanced "cache:" search operator [2], but this is the equivalent to clicking the "Cached" next to each Google Search Result. Hence, the use of the Advanced "site:" Search Operator and then clicking "Cached" is preferred. | Google provides the Advanced "cache:" search operator [2], but this is the equivalent to clicking the "Cached" next to each Google Search Result. Hence, the use of the Advanced "site:" Search Operator and then clicking "Cached" is preferred. | ||

| Line 30: | Line 40: | ||

==== Example ==== | ==== Example ==== | ||

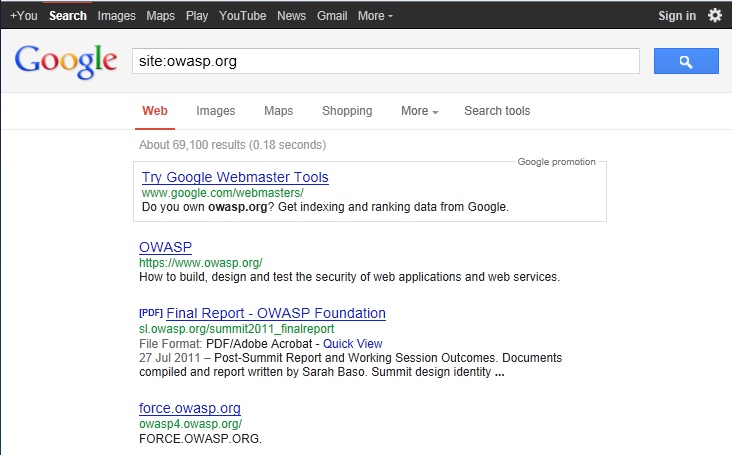

| − | To find the web content of owasp.org indexed by | + | To find the web content of owasp.org indexed by a typical search engine, the syntax required is: |

<pre> | <pre> | ||

site:owasp.org | site:owasp.org | ||

| Line 36: | Line 46: | ||

[[Image:Google_site_Operator_Search_Results_Example_20121219.jpg]] | [[Image:Google_site_Operator_Search_Results_Example_20121219.jpg]] | ||

| − | To display the index.html of owasp.org as cached | + | To display the index.html of owasp.org as cached, the syntax is: |

<pre> | <pre> | ||

cache:owasp.org | cache:owasp.org | ||

| Line 43: | Line 53: | ||

=== Gray Box testing and example === | === Gray Box testing and example === | ||

| − | + | Gray Box testing is the same as Black Box testing above. | |

== Tools == | == Tools == | ||

Revision as of 11:59, 15 October 2013

This article is part of the new OWASP Testing Guide v4.

Summary

There are direct and indirect elements to search engine discovery and reconnaissance. Direct methods relate to searching the indexes and the associated content from caches. Indirect methods relate to gleaning sensitive design and configuration information by searching forums, newsgroups and tendering websites.

Once a search engine robot has finished crawling, it indexes the web page based on tags and associated attributes, such as <TITLE>, in order to return relevant search results [1].

If the robots.txt file is not updated during the lifetime of the web site, and inline HTML meta tags that instruct robots not to index content have not been used, then it is possible for indexes to contain web content that the owners did not intend to be included. Website owners may use the previously mentioned robots.txt, HTML meta tags, authentication and tools provided by search engines to remove such content.
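
As a rough illustration of the two mechanisms above, the following Python sketch checks whether a robots.txt body disallows a URL and whether a page carries a robots meta tag asking not to be indexed. It uses only the standard library. The function names, the example.com URLs and the sample inputs are illustrative assumptions and are not part of this guide.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def robots_txt_allows(robots_txt, url, agent="*"):
    """Return True if the given robots.txt text lets `agent` fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None (e.g. <meta name>), so default to "".
        attr = {name.lower(): (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for item in attr.get("content", "").split(","):
                self.directives.add(item.strip().lower())


def meta_blocks_indexing(html):
    """Return True if the page asks robots not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return bool(parser.directives & {"noindex", "none"})


if __name__ == "__main__":
    # Hypothetical robots.txt and page content for demonstration only.
    sample_robots = "User-agent: *\nDisallow: /staging/\n"
    sample_page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
    print(robots_txt_allows(sample_robots, "https://www.example.com/staging/index.html"))
    print(meta_blocks_indexing(sample_page))
```

A page that is neither disallowed in robots.txt nor marked with such a meta tag is a candidate for appearing in search indexes, which is what the tests in this section look for.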

Test Objectives

To understand what sensitive design and configuration information about the application/system/organisation is exposed, both directly (on the organisation's website) and indirectly (on a third party website).

How to Test

Using a search engine, search for:

- Network diagrams and configurations

- Archived posts and emails by administrators and other key staff

- Logon procedures and username formats

- Usernames and passwords

- Error message content

- Development, test, UAT and staging versions of the website
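
One possible way to turn the checklist above into search queries is sketched below in Python. The search phrases are hypothetical starting points rather than recommended terms, example.com is a placeholder domain, and the "-site:" exclusion used for third party results is an assumption that not every search engine handles identically.

```python
# Hypothetical search phrases, one per checklist item; adjust for the target.
CHECKLIST_TERMS = {
    "Network diagrams and configurations": '"network diagram"',
    "Archived posts and emails by administrators and other key staff": '"administrator" "email"',
    "Logon procedures and username formats": '"username format"',
    "Usernames and passwords": '"password"',
    "Error message content": '"error" "stack trace"',
    "Development, test, UAT and staging versions of the website": '"staging" OR "UAT"',
}


def direct_query(domain, terms):
    """Query for content indexed on the organisation's own site."""
    return f"site:{domain} {terms}"


def indirect_query(domain, terms):
    """Query for third party pages mentioning the domain (assumes -site: works)."""
    return f'"{domain}" -site:{domain} {terms}'


def build_queries(domain):
    """Return (item, direct query, indirect query) for every checklist item."""
    return [
        (item, direct_query(domain, terms), indirect_query(domain, terms))
        for item, terms in CHECKLIST_TERMS.items()
    ]


if __name__ == "__main__":
    for item, direct, indirect in build_queries("example.com"):
        print(f"{item}\n  {direct}\n  {indirect}")
```

Keeping the direct and indirect forms separate mirrors the two kinds of exposure described in the test objectives.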

Black Box Testing

Using the advanced "site:" search operator, it is possible to restrict search results to a specific domain [2]. Do not limit testing to just one search engine provider - they may generate different results depending on when they crawled content and their own algorithms. Consider:

- Baidu

- binsearch.info

- Bing

- Duck Duck Go

- ixquick/Startpage

- Google

- Shodan

Duck Duck Go and ixquick/Startpage provide reduced information leakage about the tester.
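
To repeat the same query across several providers, a tester might generate one search URL per engine, as in the Python sketch below. The endpoints and query parameter names are assumptions based on the engines' public search pages and should be verified before use. Only general web search engines are covered here. This does not automate result retrieval, which may be restricted by each provider's terms of service.

```python
from urllib.parse import urlencode

# Assumed search endpoints and query parameter names; verify before relying on them.
ENGINES = {
    "Baidu": ("https://www.baidu.com/s", "wd"),
    "Bing": ("https://www.bing.com/search", "q"),
    "Duck Duck Go": ("https://duckduckgo.com/", "q"),
    "Google": ("https://www.google.com/search", "q"),
}


def search_urls(query):
    """Return a mapping of engine name to a URL that runs `query` on that engine."""
    return {
        name: f"{base}?{urlencode({param: query})}"
        for name, (base, param) in ENGINES.items()
    }


if __name__ == "__main__":
    for name, url in search_urls("site:owasp.org").items():
        print(f"{name}: {url}")
```

Opening each URL manually and comparing the results side by side makes differences in crawl dates and ranking between providers easier to spot.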

Google provides the advanced "cache:" search operator [2], but this is equivalent to clicking the "Cached" link next to each Google search result. Hence, using the advanced "site:" search operator and then clicking "Cached" is preferred.

The Google SOAP Search API supports the doGetCachedPage and the associated doGetCachedPageResponse SOAP Messages [3] to assist with retrieving cached pages. An implementation of this is under development by the OWASP "Google Hacking" Project.

Example

To find the web content of owasp.org indexed by a typical search engine, the syntax required is:

site:owasp.org

To display the index.html of owasp.org as cached, the syntax is:

cache:owasp.org
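
When the target is supplied as a full URL rather than a bare domain, the two operators above can be derived from it. The following Python sketch shows one way to do so; the helper names are hypothetical and the normalisation (lower-casing, dropping scheme and path) is an assumption about what a tester would want.

```python
from urllib.parse import urlparse


def host_of(target):
    """Extract the host name from a bare domain or a full URL."""
    # urlparse only recognises a host when the string contains "//".
    parsed = urlparse(target if "//" in target else f"//{target}")
    return parsed.hostname or ""


def site_query(target):
    """Build the "site:" query for the target's host."""
    return f"site:{host_of(target)}"


def cache_query(target):
    """Build the "cache:" query for the target's host."""
    return f"cache:{host_of(target)}"


if __name__ == "__main__":
    print(site_query("owasp.org"))
    print(cache_query("https://owasp.org/index.html"))
```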

Gray Box testing and example

Gray Box testing is the same as Black Box testing above.

Tools

[1] FoundStone SiteDigger - http://www.mcafee.com/uk/downloads/free-tools/sitedigger.aspx

[2] Google Hacker - http://yehg.net/lab/pr0js/files.php/googlehacker.zip

[3] Stach & Liu's Google Hacking Diggity Project - http://www.stachliu.com/resources/tools/google-hacking-diggity-project/

Vulnerability References

Web

[1] "Google Basics: Learn how Google Discovers, Crawls, and Serves Web Pages" - http://www.google.com/support/webmasters/bin/answer.py?answer=70897

[2] "Operators and More Search Help" - http://support.google.com/websearch/bin/answer.py?hl=en&answer=136861

Remediation

Carefully consider the sensitivity of design and configuration information before it is posted online.

Periodically review the sensitivity of existing design and configuration information that is posted online.