Category:Summit 2011 Browser Security Track

Revision as of 01:05, 6 January 2011

The Browser Security track of the OWASP Summit 2011 is a community effort to bring together browser vendors, major web app providers, and OWASP leaders to discuss what can be done to enhance web security through the browser. The track comprises a full day of workshops on chosen subtopics (see below). We have invited some of the world's top experts to maximize the chances of moving this important area of application security forward.

Browser vendors attending so far: Chrome, Firefox, Internet Explorer

Join the Google Group for this track (https://groups.google.com/group/owasp-summit-browsersec) today and get involved in planning, working forms, etc.

Welcome!

/John Wilander, Session Chair

Virtualization and Sandboxing for Secure Multi-Domain Web Apps

Co-chair Dr Jasvir Nagra

Jasvir Nagra is a researcher and software engineer at Google. He is the designer of Caja, a secure subset of HTML, CSS and JavaScript; co-author of Surreptitious Software, a book on obfuscation, software watermarking and tamper-proofing; and a contributor to Shindig, the reference implementation of OpenSocial.

Co-chair Gareth Heyes

Gareth "Gaz" Heyes calls himself Chief Conspiracy Theorist and is affiliated with Microsoft. He is the designer and developer behind JSReg – a JavaScript sandbox which converts code using regular expressions; HTMLReg & CSSReg – converters of malicious HTML/CSS into a safe form of HTML. He is also one of the co-authors of Web Application Obfuscation: '-/WAFs..Evasion..Filters//alert(/Obfuscation/)-' – a book on how an attacker would bypass different types of security controls including IDS/IPS.

Subjects and Goals (draft)

Goals and issues that need browser vendor cooperation:

- Attenuated versions of existing APIs for sandboxed code. How should browsers introduce new APIs into the sandbox or allow the sandbox to provide attenuated versions of existing APIs to sandboxed code? For example, let's say the sandbox wants to provide an attenuated "alert" function to sandboxed code which does something slightly different than the real "alert". What kind of APIs could the browser provide to safely allow such extensions? Do these need to be standardized so that different sandbox vendors can interoperate?

- Client-side sandboxed apps maintaining state and authentication. For example, if a user is created in a sandboxed app, how is it determined what that user can do?

- Create a standard for modifying a sandboxed environment

- Deprecate and discourage standards which ambiently or undeniably pass credentials.

- Adopt a simpler rights amplification API like Web Introducer

- Create a standard for authentication within a sandboxed environment (maybe interfacing with existing auth without passing credentials, the way OAuth works)
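
To make the attenuated "alert" example above concrete, here is a minimal sketch of attenuation done purely in script, without any browser support. The names `attenuateAlert`, `AlertFn` and `AttenuationPolicy`, and the specific limits, are hypothetical and chosen only for illustration; this is not how Caja or JSReg implement it. The open question for the track is what the browser itself would need to provide so that wrappers like this can be installed safely and interoperably.

```typescript
// Hypothetical sketch: wrap a powerful function and hand only the
// weaker wrapper to sandboxed code. All names and limits are assumptions.
type AlertFn = (message: string) => void;

interface AttenuationPolicy {
  maxLength: number; // longest message the sandbox may display
  maxCalls: number;  // total number of alerts the sandbox may raise
  label: string;     // prefix that marks the message as coming from the sandbox
}

function attenuateAlert(realAlert: AlertFn, policy: AttenuationPolicy): AlertFn {
  let calls = 0;
  const attenuated: AlertFn = (message: string): void => {
    if (calls >= policy.maxCalls) {
      return; // silently drop alerts beyond the budget
    }
    calls += 1;
    // Coerce to a string once, then truncate to the allowed length.
    const text = String(message).slice(0, policy.maxLength);
    realAlert(`[${policy.label}] ${text}`);
  };
  // Freeze the wrapper so sandboxed code cannot add or replace properties on it.
  return Object.freeze(attenuated);
}

// Usage: a recording stand-in replaces the real alert so the sketch runs outside a browser.
const shown: string[] = [];
const sandboxAlert = attenuateAlert((m) => { shown.push(m); }, {
  maxLength: 10,
  maxCalls: 2,
  label: "widget",
});
sandboxAlert("hello from the sandbox"); // truncated to 10 characters
sandboxAlert("second");
sandboxAlert("third is dropped");       // exceeds the call budget
```

The sandboxed code only ever receives `sandboxAlert`; it never holds a reference to the real function, which is the property a standardized sandbox API would have to guarantee.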

Working Form

The working form will most probably be short presentations to frame the topic, followed by round table discussions. Depending on the number of attendees we'll break into groups.

Enduser Warnings

Subjects and Goals (draft)

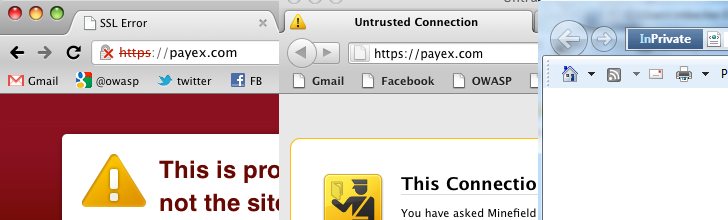

Clearly there is a need for warnings that users understand and that convey the right information. Perhaps we can agree on some guidelines or at least exchange lessons learned.

- How should browsers signal invalid SSL certs to the enduser? Are we helping security right now? What to do about 50% of users clicking through warnings? Mozilla replaces the padlock with a site identity button in Firefox 4. "Larry" will inform the user of the site's status. Google recently tried out a skull & bones icon for bad certs but moved back to padlocks again.

- How should browsers communicate other kinds of information such as privacy, malware warnings, "not visited before", etc.? Forbes had an interesting example of how to visualize privacy.

New HTTP Headers

Are new opt-in HTTP headers the right way to add security features? For example:

- HTTP Strict Transport Security for enforced HTTPS (supported in Chrome 4, Firefox+NoScript, Firefox 4 and up)

- X-Frame-Options for non-framing (supported in IE8, FF3.6, Safari 4, Opera 10.5, Chrome 4 and up)

- Content Security Policy for whitelisting of script and media sources (supported in Firefox 4 and up)
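
As a rough illustration of what opting in to these three headers looks like on the server side, here is a minimal sketch. The helper `buildSecurityHeaders`, its option names, and the example source `https://cdn.example.com` are hypothetical. The Content Security Policy line uses the header name and directive syntax as later standardized, which may differ from the experimental form that browsers shipped at the time.

```typescript
// Hypothetical helper: builds the three opt-in response headers listed above.
// Option names and example values are assumptions made for illustration.
interface SecurityHeaderOptions {
  hstsMaxAgeSeconds: number;            // how long the browser should enforce HTTPS
  includeSubDomains: boolean;           // extend the HSTS policy to subdomains
  frameOptions: "DENY" | "SAMEORIGIN";  // who may frame the page
  scriptSources: string[];              // whitelisted script origins
  imageSources: string[];               // whitelisted image origins
}

function buildSecurityHeaders(opts: SecurityHeaderOptions): Record<string, string> {
  const age = opts.hstsMaxAgeSeconds;
  if (!isFinite(age) || age < 0 || Math.floor(age) !== age) {
    throw new RangeError("hstsMaxAgeSeconds must be a non-negative integer");
  }
  // HSTS is only meaningful when the response is served over HTTPS.
  const hsts = `max-age=${age}` + (opts.includeSubDomains ? "; includeSubDomains" : "");
  // One directive per resource type; sources are separated by spaces.
  const csp = [
    `script-src ${opts.scriptSources.join(" ")}`,
    `img-src ${opts.imageSources.join(" ")}`,
  ].join("; ");
  return {
    "Strict-Transport-Security": hsts,
    "X-Frame-Options": opts.frameOptions,
    "Content-Security-Policy": csp,
  };
}

// Usage: one year of enforced HTTPS, no framing, scripts from self plus one CDN.
const exampleHeaders = buildSecurityHeaders({
  hstsMaxAgeSeconds: 31536000,
  includeSubDomains: true,
  frameOptions: "DENY",
  scriptSources: ["'self'", "https://cdn.example.com"],
  imageSources: ["'self'"],
});
```

Because each header is opt-in, a site that sends none of them behaves exactly as before, which is the main argument for this deployment model and also the reason adoption depends on site operators.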

Co-chair John Wilander

John Wilander is chapter co-leader in Sweden and ran the AppSec conference in Stockholm 2010. He is still pursuing his PhD in software security and works as an appsec consultant in media/banking/healthcare.

Co-chair Michael Coates

Michael Coates is a long-time OWASP contributor and leader, as well as a Mozilla employee. He leads the AppSensor and TLS Cheat Sheet projects.

Securing Plugins

Should browsers ship with default plugins? Should plugins be auto-updated? Can plugins or versions of plugins be blacklisted centrally?

Blacklisting

Can we cooperate better on blacklisting? Does it work between cultures, i.e. can we have the same process for reporting throughout the world?

OS Integration

More and more features in browsers get integrated with the underlying operating system: processes, fonts, the filesystem, 3D graphics. How do we secure this?

Sandboxed Tabs/Domains/Browser

Microsoft Research has been doing some groundbreaking work on the Gazelle browser, Chrome uses a sandboxing model, and the IronSuite provides sandboxed versions of Firefox (IronFox) and Safari on Mac OS X.
